# Use Case: Automating Auto Insurance Claim Processing with IBM Watsonx Orchestrate and IBM Watsonx.ai on Phoeniqs Vela

# Introduction

In collaboration with Phoeniqs, IBM has successfully completed a project demonstrating the integration of Watsonx Orchestrate (WxO) running in the cloud with Watsonx.ai foundation models on-premises (Vela). The project aimed to validate the technical feasibility of this hybrid architecture while highlighting its application in the insurance industry.

In this white paper, we will walk through a use case where an insurance case officer processes multiple auto insurance claims daily. Through the integration of Watsonx Orchestrate with Watsonx.ai, we demonstrate how this solution automates claim processing tasks, improving efficiency, reducing operational costs, and enhancing overall productivity.

The project’s objectives included:

- Conducting technical feasibility testing for cloud-on-prem integration.

- Overcoming integration challenges.

- Developing and using OpenAPI specifications.

- Creating WxO skills from OpenAPI specs.

- Designing an end-to-end architecture.

By utilizing a mock insurance use case, we showcase how Watsonx Orchestrate simplifies complex workflows for the insurance industry, leveraging foundation models for automation.

# Overview of Watsonx Orchestrate, Watsonx.ai, and Vela

- Watsonx Orchestrate (WxO): An AI-powered orchestration platform that streamlines task automation by enabling business users to run workflows and manage processes through AI-driven skills. WxO enables users to interact through a chat interface or an AI studio (Watsonx Assistant interface) and automates decision-making, task handling, and communication workflows.

- Watsonx.ai: A suite of large language models (LLMs) designed to process natural language and perform complex tasks such as summarization, named entity recognition (NER), and recommendation generation. Watsonx.ai models can be deployed on-premises or in the cloud, providing organizations with flexibility in deploying their AI workloads.

- Vela: The on-prem version of Watsonx.ai, installed on Red Hat OpenShift. It provides foundation model capabilities for businesses that need data sovereignty and in-house AI solutions.

# Use Case Overview

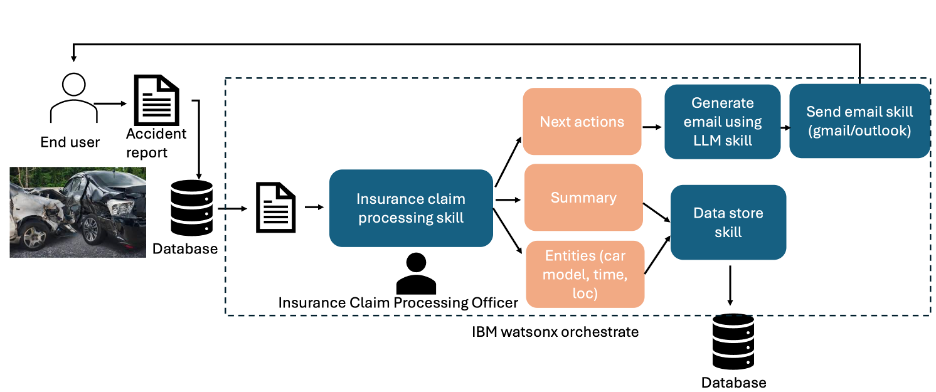

The insurance industry faces the challenge of managing and processing large volumes of claims daily. In this use case, the insurance case officer receives numerous auto insurance claims, each requiring extraction of specific information, report summarization, and communication of next steps to the claimant.

IBM Watsonx Orchestrate integrates with Watsonx.ai to automate these tasks using foundation models. The end-to-end workflow is executed with minimal human intervention, significantly reducing processing time and costs while improving claim accuracy.

# Workflow Description

- Claim Report Submission: Claim reports are submitted to the insurance company and stored in a central database.

- WxO Dashboard Interaction: The insurance case officer logs into the Watsonx Orchestrate dashboard and retrieves the list of new claims. From here, they initiate a skill flow by clicking on the "Process Insurance Claim" skill.

- Skill Flow Execution:

  - The "Process Insurance Claim" skill retrieves the claim report from the database.

  - It triggers a series of tasks including:

    - Named Entity Recognition (NER): Identifies key information such as the car model involved in the accident, accident date, time, and location.

    - Report Summarization: Condenses the claim report into a concise summary for easy understanding.

    - Action Recommendation: Suggests next steps for the claimant based on the details of the claim.

- Automated Communication: After the claim is processed, a secondary skill, "Send Email", is triggered. It composes an email to the claimant that includes a summary of the incident, recommended next steps, and any necessary documents, and then sends it automatically. For this project, we used a Gmail skill integration. However, other mail services such as Outlook can also be integrated as required.
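The workflow above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual skill code: the names `process_insurance_claim`, `compose_email`, `ClaimResult`, and `fake_model` are hypothetical, and the model call is replaced by a stub so the example runs without any endpoint.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical stand-in for a foundation model call: takes a task name and
# the claim report text, and returns the model's text output.
ModelCall = Callable[[str, str], str]


@dataclass
class ClaimResult:
    entities: str
    summary: str
    recommendation: str


def process_insurance_claim(report: str, call_model: ModelCall) -> ClaimResult:
    """Run the three tasks of the "Process Insurance Claim" skill in order."""
    return ClaimResult(
        entities=call_model("ner", report),
        summary=call_model("summarization", report),
        recommendation=call_model("recommendation", report),
    )


def compose_email(claimant: str, result: ClaimResult) -> Dict[str, str]:
    """Build the message that a "Send Email" skill would deliver."""
    body = (
        f"Dear {claimant},\n\n"
        f"Summary of the incident:\n{result.summary}\n\n"
        f"Recommended next steps:\n{result.recommendation}\n"
    )
    return {"to": claimant, "subject": "Your auto insurance claim", "body": body}


def fake_model(task: str, report: str) -> str:
    # Canned output so the sketch runs without a model endpoint.
    return f"[{task}] output for a report of {len(report)} characters"


result = process_insurance_claim("Rear-end collision on 3 May.", fake_model)
email = compose_email("claimant@example.com", result)
print(email["body"])
```

Passing the model call in as a parameter keeps the flow logic independent of where the models run, which mirrors the separation between the skill flow and the model endpoints described here.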

# Technical Details

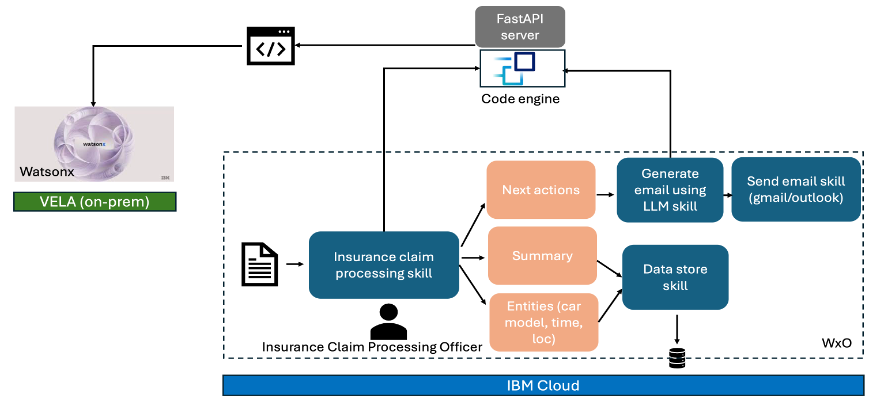

- OpenAPI Specification: Each skill in Watsonx Orchestrate is created based on an OpenAPI spec that defines the structure of APIs. In this project, the OpenAPI spec outlined the endpoints and required inputs for processing insurance claims.

- API Communication via IBM Code Engine: Watsonx Orchestrate calls an API hosted on IBM Code Engine, where a FastAPI server handles the requests. The FastAPI server uses secure authentication to interact with Watsonx.ai endpoints, leveraging foundation models to complete tasks like NER, summarization, and recommendations. The input to the models is the insurance claim report, and each task is mapped to a specific model.

- LLM Integration and Response Handling: The FastAPI server routes the claim report to the appropriate Watsonx.ai LLM endpoint. The model processes the input and returns a JSON response containing the results. The results are then sent back to Watsonx Orchestrate, which collates the information and presents it to the insurance case officer or triggers the next task in the workflow.

- Scalability with IBM Code Engine: The solution is designed to scale based on the workload, with IBM Code Engine automatically adjusting the number of instances depending on the load. This ensures that the system remains efficient and responsive even as the number of claims processed increases.
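The task-to-model mapping and response handling described above might look roughly like the following sketch. Everything specific here is an assumption made for illustration: the model ids are placeholders, the prompt wording is invented, and the request and response shapes (`model_id`, `input`, `parameters`, and `results[0].generated_text`) are assumed rather than taken from the project's code. The HTTP call is injected as a `send` function so the example runs offline.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical task-to-model mapping; the model ids are placeholders.
TASK_MODELS: Dict[str, str] = {
    "ner": "example/ner-model",
    "summarization": "example/summarization-model",
    "recommendation": "example/recommendation-model",
}

# Illustrative prompt templates, one per task.
PROMPTS: Dict[str, str] = {
    "ner": "Extract the car model, accident date, time, and location:\n{report}",
    "summarization": "Summarize this claim report concisely:\n{report}",
    "recommendation": "Suggest next steps for the claimant:\n{report}",
}


def build_request(task: str, report: str) -> Dict[str, Any]:
    """Map a task to its model and build the (assumed) request payload."""
    if task not in TASK_MODELS:
        raise ValueError(f"unknown task: {task}")
    return {
        "model_id": TASK_MODELS[task],
        "input": PROMPTS[task].format(report=report),
        "parameters": {"max_new_tokens": 300},
    }


def extract_text(response_body: str) -> str:
    """Pull the generated text out of the (assumed) JSON response shape."""
    data = json.loads(response_body)
    return data["results"][0]["generated_text"].strip()


def run_task(
    task: str, report: str, send: Callable[[Dict[str, Any]], str]
) -> Dict[str, str]:
    """Send one task to its model and return a result for the orchestrator."""
    text = extract_text(send(build_request(task, report)))
    return {"task": task, "result": text}


def fake_send(payload: Dict[str, Any]) -> str:
    # Stand-in for the authenticated HTTP call to the model endpoint.
    reply = f" handled by {payload['model_id']} "
    return json.dumps({"results": [{"generated_text": reply}]})


print(run_task("ner", "Rear-end collision on 3 May.", fake_send))
```

In a real deployment, `send` would wrap the authenticated request to the Watsonx.ai endpoint, and each function would sit behind a FastAPI route.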

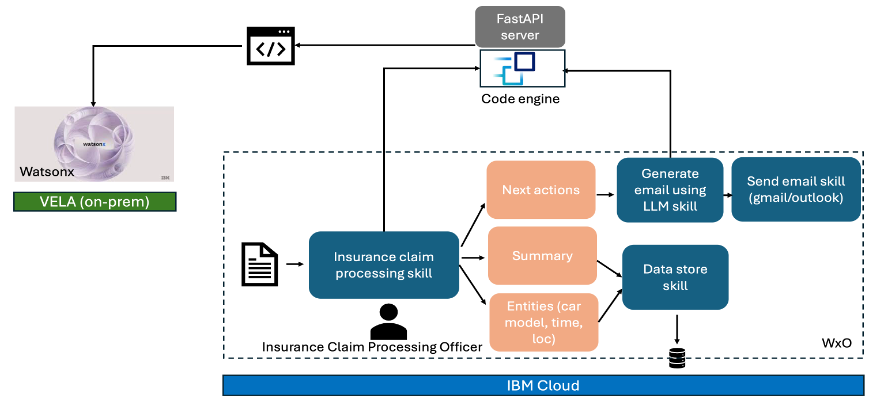

Hybrid Cloud/Multi Cloud Architecture: Although the current implementation uses IBM Code Engine and FastAPI, the architecture is adaptable to other cloud providers:

- For AWS, the IBM Code Engine component could be replaced with AWS Fargate or AWS Lambda.

- For Azure, Azure Container Apps could serve as the alternative platform for hosting the FastAPI server.

This flexibility allows organizations to choose the cloud provider that best suits their operational requirements. Figure 1 shows the schematic diagram of the workflow.

Figure 2 shows the architecture with Watsonx.ai installed on-prem on Phoeniqs Vela, together with IBM Code Engine and Watsonx Orchestrate.

The end-to-end architecture is flexible and can be adapted to other clouds, such as AWS or Azure, depending on where Watsonx Orchestrate is installed (Figure 3).

Depending on the cloud provider, a suitable serverless container engine can be chosen. For example, if Watsonx Orchestrate is installed on AWS, the FastAPI server containing the LLM pipelines can be containerized and deployed on AWS Fargate or Lambda. Likewise, on Azure, it can be deployed on Azure Container Apps. The API can also be deployed on Vela in the Phoeniqs environment. Irrespective of the container engine used, the OpenAPI spec remains the same except for the server URL, which depends on the platform where the FastAPI server is hosted.
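To illustrate how only the server URL changes between platforms, the sketch below builds a small OpenAPI document as a Python dictionary and swaps the `servers` entry per deployment. The path `/process-claim`, the `claim_report` field, the operation id, and all host names are hypothetical and are not taken from the project's published spec.

```python
import copy
import json
from typing import Any, Dict

# Hypothetical minimal spec; only the "servers" entry differs per platform.
BASE_SPEC: Dict[str, Any] = {
    "openapi": "3.0.3",
    "info": {"title": "Insurance Claim Processing", "version": "1.0.0"},
    "servers": [{"url": "https://placeholder.example.com"}],
    "paths": {
        "/process-claim": {
            "post": {
                "operationId": "processInsuranceClaim",
                "summary": "Process Insurance Claim",
                "requestBody": {
                    "required": True,
                    "content": {
                        "application/json": {
                            "schema": {
                                "type": "object",
                                "required": ["claim_report"],
                                "properties": {
                                    "claim_report": {"type": "string"}
                                },
                            }
                        }
                    },
                },
                "responses": {
                    "200": {
                        "description": "Entities, summary, and recommendation"
                    }
                },
            }
        }
    },
}


def spec_for(server_url: str) -> Dict[str, Any]:
    """Return a copy of the spec that points at the given host."""
    spec = copy.deepcopy(BASE_SPEC)
    spec["servers"] = [{"url": server_url}]
    return spec


# Placeholder hosts standing in for two different hosting platforms.
code_engine_spec = spec_for("https://claims.code-engine.example.com")
fargate_spec = spec_for("https://claims.fargate.example.com")

print(json.dumps(code_engine_spec["servers"], indent=2))
```

Because the paths and schemas are shared, a skill created from one variant would behave the same as one created from the other, apart from the host it calls.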

This abstracts away the complexity and keeps the architecture flexible. Business users interacting with Watsonx Orchestrate do not need to worry about how the LLM pipeline is built or deployed. Developers and AI engineers, in turn, can update the source code, containerize, and redeploy the application without changing the endpoint, so the architecture also supports CI/CD.

Project Outcomes and Benefits: The integration of Watsonx Orchestrate with Watsonx.ai on-prem demonstrated significant advantages for the insurance industry:

- Reduced Processing Time: Tasks such as claim report processing, information extraction, and communication were automated, freeing up time for insurance officers to focus on higher-level decision-making.

- Increased Accuracy: Foundation models processed the claims consistently, minimizing the risk of human error during data extraction and recommendation generation.

- Operational Cost Savings: By automating routine tasks, the project showcased how insurance companies can reduce operational costs without sacrificing service quality.

- Scalability and Efficiency: The use of IBM Code Engine ensured that the system could scale based on demand, optimizing resource utilization.

Code Base and OpenAPI spec: The complete code base and the sample OpenAPI spec (in JSON format) are available on GitHub:

- https://github.ibm.com/Rahul-Deb-Das/Insurance-Claim-Orchestrator-using-IBM-Watsonx-Orchestrate

- https://github.com/rddspatial/Insurance-Claim-Orchestrator-using-IBM-Watsonx-Orchestrate/

Note: The Watsonx Orchestrate chat interface expects the API endpoint without a trailing slash.
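One simple way to guard against this is to normalize the endpoint before placing it in the OpenAPI spec. The helper below is a hypothetical convenience, not part of the project code, and the URL is a placeholder.

```python
def normalize_endpoint(url: str) -> str:
    """Strip surrounding whitespace and any trailing slashes from an endpoint."""
    return url.strip().rstrip("/")


endpoint = normalize_endpoint("https://claims.example.com/process-claim/ ")
print(endpoint)
```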

# How to Connect to the Phoeniqs Vela Platform and Get the Credentials

See

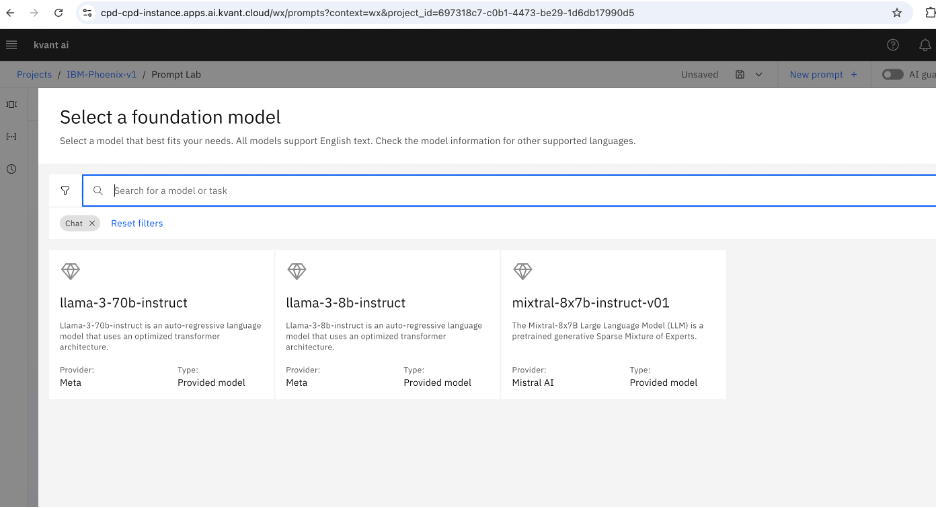

andOnce user sign up and login to the Phoeniqs portal, they can create a project and choose various foundation models or large language models (LLM) for performing their tasks (Figure 4).

Conclusion: IBM’s Watsonx Orchestrate and Watsonx.ai provide a powerful, flexible solution for automating complex workflows in the insurance industry. This project demonstrated the seamless integration of on-prem and cloud-based AI solutions, highlighting the potential for significant productivity gains, cost reductions, and process optimization.